#GCP: Data pipeline - from datastore to Google Data Studio

03 May 2020 | Analytics, Cloud Platforms | 8 min | 54204

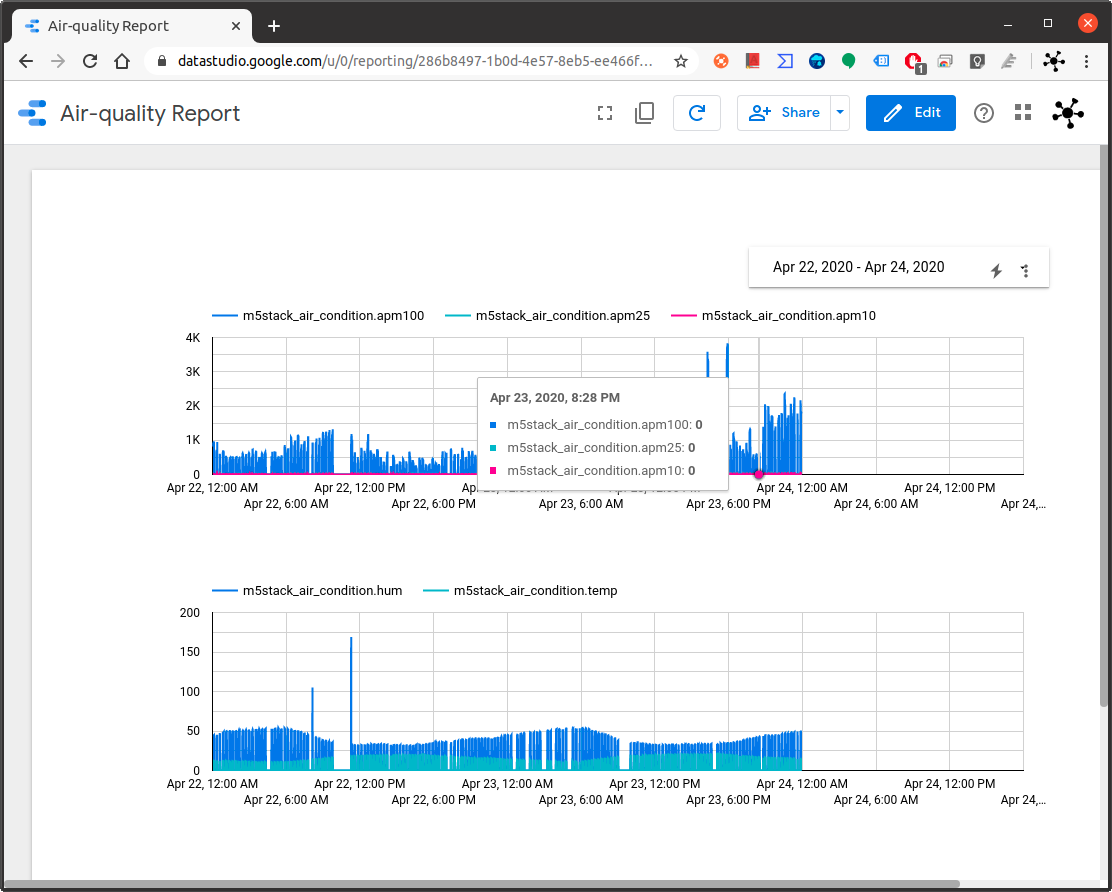

This tutorial explains how to export data from Google Firestore to Google Data Studio to visualize it, or to import it into Google Colab to analyze it and train a machine learning model.
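The tutorial's own export steps are not reproduced here. As a minimal sketch, assuming the Firestore documents have already been fetched as plain dictionaries (for example with `DocumentSnapshot.to_dict()` from the `google-cloud-firestore` client), nested map fields can be flattened into a single CSV table. CSV is a convenient intermediate format because Data Studio's file upload connector and pandas in Colab can both read it. The helper names and the field names in the usage example are hypothetical.

```python
import csv
import io
from collections.abc import Iterable, Mapping
from typing import Any


def flatten(record: Mapping[str, Any], prefix: str = "") -> dict[str, Any]:
    """Flatten nested maps (Firestore map fields) into dotted column names."""
    flat: dict[str, Any] = {}
    for key, value in record.items():
        column = f"{prefix}{key}"
        if isinstance(value, Mapping):
            flat.update(flatten(value, prefix=f"{column}."))
        else:
            flat[column] = value
    return flat


def documents_to_csv(documents: Iterable[Mapping[str, Any]]) -> str:
    """Turn document dicts into CSV text; missing fields become empty cells."""
    rows = [flatten(doc) for doc in documents]
    # Union of all columns, sorted so that the header order is deterministic.
    columns = sorted({column for row in rows for column in row})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical readings, shaped like DocumentSnapshot.to_dict() output.
    readings = [
        {"device": "m5stack-1", "temperature": 21.5,
         "weather": {"temp": 18.0, "humidity": 60}},
        {"device": "m5stack-1", "temperature": 21.9,
         "weather": {"temp": 18.4}},
    ]
    print(documents_to_csv(readings))
```

Flattening with dotted names keeps every document on one row, which suits tabular tools; documents that lack a field simply get an empty cell in that column.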

![Data Report]()

Fig. 1: Data diagrams on Google Data Studio

This is the third tutorial in the series "implementing real-time data pipelines - from generation to models". The other tutorials are the following:

#GCP: Implementing Real-Time data pipelines - from ingest to datastore

15 Apr 2020 | Analytics, Cloud Platforms, M5Stack | 13 min | 53276

Two weeks ago, I published a tutorial explaining how to connect an M5Stack running MicroPython to Google Cloud Platform using IoT Core, and I mentioned that upcoming tutorials would cover the following topics:

- Collecting and synchronizing external data (weather from OpenWeatherMap) and data from other sensors (window/door status, sneezing detector).

- Saving the data to a NoSQL database

- Displaying the obtained data on Google Data Studio (chec...

#Portainer: Managing Docker Engines remotely over TCP socket (TLS)

01 Dec 2019 | Analytics, Cloud Platforms | 4 min | 70205

This tutorial is about managing a Docker Engine remotely using Portainer connected to the protected Docker daemon socket (TCP port 2376). By default, you can manage Docker locally through a non-networked UNIX socket (option `-v /var/run/docker.sock:/var/run/docker.sock` when running Portainer). But if you want the Docker Engine to be reachable over the network in a safe manner, you need to enable TLS by specifying the `--tlsverify` flag and pointing Docker's `--tlscacert` flag to a CA certificate. Then, the daemon only accepts connections from clients that are authenticated by a certificate si...

11 May 2019 | Analytics | 12 min | 208548

PEP-8 (sometimes PEP 8 or PEP8) is a coding standard and style guide for writing readable, maintainable Python code. It was written in 2001 by Guido van Rossum, Barry Warsaw, and Nick Coghlan and provides guidelines and best practices for writing Python code. PEP stands for Python Enhancement Proposal; PEPs describe and document how the Python language evolves, providing a reference point (and, in a way, a standard) for the pythonic way to write code.

This tutorial presents some of the most important points of PEP-8. If you want, you can read the ful...
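Several of PEP 8's naming and layout rules are easiest to see in code. The small module below is an illustration written for this page, not an excerpt from the tutorial, and its class, function and constant names are made up. It follows these conventions: CapWords class names, lowercase_with_underscores for functions, methods and attributes, UPPER_CASE module constants, four-space indentation, two blank lines around top-level definitions, two spaces before inline comments, and lines under 79 characters.

```python
"""Small module following PEP 8 naming and layout conventions."""

import math

MIN_VALID_READINGS = 1  # constants: UPPER_CASE_WITH_UNDERSCORES


class SensorReading:  # classes: CapWords
    """One measurement; attribute names use snake_case."""

    def __init__(self, sensor_id: str, value: float) -> None:
        self.sensor_id = sensor_id
        self.value = value

    def is_valid(self) -> bool:  # methods: lowercase_with_underscores
        """Treat NaN values as missing measurements."""
        return not math.isnan(self.value)


def average_value(readings: list[SensorReading]) -> float:
    """Return the mean of all valid readings (functions: snake_case)."""
    valid_values = [r.value for r in readings if r.is_valid()]
    if len(valid_values) < MIN_VALID_READINGS:
        raise ValueError("not enough valid readings")
    return sum(valid_values) / len(valid_values)
```

Running a checker such as `pycodestyle` over a file like this is a quick way to confirm that the layout rules are met.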

26 Apr 2019 | Analytics, Hacking | 1 min | 10639

Attention Docker Hub users - Docker Hub has been hacked!

An email with the following notice was sent to users whose account data may have been exposed:

> During a brief period of unauthorized access to a Docker Hub database, sensitive data from approximately 190,000 accounts may have been exposed (less than 5% of Hub users). Data includes usernames and hashed passwords for a small percentage of these users, as well as Github and Bitbucket tokens for Docker autobuilds (full email).

If you received this email, you should do the following (and if you didn't receive it, do it anyway ;)):

Change your...

- 10

Apr - 2019Analytics

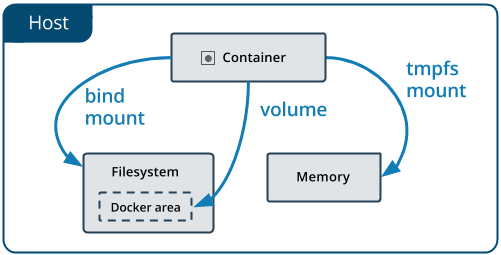

4 min | 5083This post is about data inside Docker containers. As I mentioned in the last post #Analytics: Docker for Data Science Environment, data in Docker can either be temporary or persistent. In this tutorial, I will focus on Docker volumes, but I will include some info about temporary data and bind mounts too.

![Types of mounts tmpfs]()

Fig. 1: Data in a Docker container (source)

Temporary Data

Inside a Docker container, data can be kept temporarily in two ways. By default, files created inside a container are stored in the container's writable layer. You do not have to do anything, but every...
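As a sketch only, not the post's own code: apart from the writable layer, which needs no flag at all, the kinds of storage this post discusses correspond to the `type=volume`, `type=bind` and `type=tmpfs` variants of `docker run --mount`. The hypothetical helper below just assembles such a command line as a Python list (e.g. for `subprocess.run`); the image name, volume name and paths are made up, and tmpfs mounts are only available when Docker runs on Linux.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Mount:
    """One Docker mount of type 'volume', 'bind' or 'tmpfs' (illustrative)."""
    kind: str
    target: str
    source: Optional[str] = None  # volume name or host path; none for tmpfs
    readonly: bool = False

    def to_flag(self) -> str:
        """Render the value passed to `docker run --mount`."""
        if self.kind not in {"volume", "bind", "tmpfs"}:
            raise ValueError(f"unknown mount type: {self.kind}")
        if self.kind == "tmpfs" and self.source is not None:
            raise ValueError("tmpfs mounts live in memory and take no source")
        if self.kind == "bind" and self.source is None:
            raise ValueError("bind mounts need a host path as source")
        parts = [f"type={self.kind}"]
        if self.source is not None:  # a volume without source is anonymous
            parts.append(f"source={self.source}")
        parts.append(f"target={self.target}")
        if self.readonly:
            parts.append("readonly")
        return ",".join(parts)


def docker_run_args(image: str, mounts: list[Mount]) -> list[str]:
    """Build a detached `docker run` command with one --mount per entry."""
    args = ["docker", "run", "-d"]
    for mount in mounts:
        args += ["--mount", mount.to_flag()]
    args.append(image)
    return args


if __name__ == "__main__":
    args = docker_run_args("python:3.8", [
        Mount("volume", "/data", source="analytics_data"),  # persistent
        Mount("bind", "/notebooks", source="/home/me/notebooks",
              readonly=True),                               # host folder
        Mount("tmpfs", "/tmp/cache"),                       # in memory only
    ])
    print(" ".join(args))
```

Volumes are managed by Docker and survive container removal, bind mounts expose an existing host folder, and tmpfs data disappears when the container stops.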

28 Dec 2018 | Analytics | 3 min | 5343

Portainer is a management UI that allows you to easily manage your different Docker environments.

By the end of this tutorial, you will be able to:

- Run Portainer for Docker management on Windows, Linux, and a cloud platform

- Start the container with a predefined admin password, in case you are on a public network

Linux/Mac OSX

If you are running Docker on Ubuntu or Mac OSX, you can start Portainer as a Docker container by typing the following:

```shell
docker volume create portainer_data
docker run -d -p 9000:9000 --name portainer --restart always -v /var/run/docker.sock:...
```

09 Dec 2018 | Analytics | 8 min | 51221

Today, I am opening a new section on my blog, and this time it is about analytics. As you may know, I've been working in research on IIoT and analytics for the last few years, but until now my blog has only shown my hobbyist projects. I want to shift the focus of my website a little and add content about data analytics, machine learning, Docker technology, etc. None of what I will publish in this section is new; there are many tutorials and great YouTube videos that explain these topics too, but I am going to focus on building an end-to-end data science project using some of the...

08 May 2018 | Analytics | 9 min | 23850

Docker is a technology that emerged about five years ago and has since simplified the packaging, distribution, installation, and execution of (complex) applications. Applications usually consist of many components that need to be installed and configured, and installing all the required dependencies and configuring them correctly is often time-consuming and frustrating for users, developers, and administrators. This is where Docker comes in: it simplifies the process by allowing developers and users to package these applications into containers.

A container image is a lightweight, stand-alone, executable pa...

06 May 2018 | Analytics | 2 min | 39408

Docker is a technology that emerged about five years ago and simplifies the packaging, distribution, installation, and execution of (complex) applications. Portainer is a management UI that allows you to easily manage your different Docker environments. If you are here because of the post title, I probably do not need to explain Docker or the management tool Portainer. But if you need to know more about these two topics, here are two links:

Let's start with the installation of Portainer for Docker management on Windows 10 (running on a Linux Container)...
